Research Impact. NSF-funded work with Boston University's Digital Business Institute and MIT's Empirica platform.

THE PROBLEM

In real online marketplaces, misleading product claims can be profitable, and buyers usually can't tell what's true until after they've paid. Reputation systems and after-the-fact moderation rarely keep up. We ask a practical question: can we change the rules of the market so that honest selling becomes the most rewarding strategy, without needing heavy moderation?

THE MECHANISM

Truth warrants let sellers escrow money to back the claims in their advertisements. Honest sellers signal credibility cheaply because a true claim never forfeits its escrow, and dishonest sellers pay buyers when caught.

1. Seller advertises a claim, e.g. "this product has feature X" or "high quality guaranteed".

2. Seller posts a warrant. The seller can optionally escrow money to back the specific claim.

3. Buyer can challenge. If the claim is false, the buyer collects the escrow. No central arbiter is required.
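The three steps above can be sketched as a small settlement routine. This is a minimal illustration, not the testbed's actual code: the `Listing` fields, the `settle` function, and the rule that a failed challenge costs the buyer nothing are all assumptions made for the example. It also assumes the truth of the claim becomes observable once the purchase is made.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    """Hypothetical listing record; field names are illustrative only."""
    seller: str
    claim: str
    claim_is_true: bool     # hidden from buyers until after purchase
    price: float
    warrant: float = 0.0    # escrowed amount; 0.0 means no warrant was posted


def settle(listing: Listing, buyer_challenges: bool) -> dict:
    """Return what the seller keeps and what the buyer collects from one sale."""
    seller_payoff = listing.price
    buyer_refund = 0.0
    challenge_succeeds = (
        buyer_challenges and listing.warrant > 0 and not listing.claim_is_true
    )
    if challenge_succeeds:
        # The escrow moves from the seller to the buyer.
        seller_payoff -= listing.warrant
        buyer_refund = listing.warrant
    # Assumption: a challenge against a true claim simply fails at no cost,
    # and the escrow is returned to the seller.
    return {"seller": seller_payoff, "buyer_refund": buyer_refund}


honest = Listing("A", "has feature X", claim_is_true=True, price=10.0, warrant=5.0)
misleading = Listing("B", "has feature X", claim_is_true=False, price=10.0, warrant=5.0)

print(settle(honest, buyer_challenges=True))
print(settle(misleading, buyer_challenges=True))
```

In this sketch the honest seller keeps the full price even when challenged, while the misleading seller loses the escrow to the buyer, which is the asymmetry the mechanism relies on.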

OUR APPROACH

- Human vs Human. Real people act as both buyers and sellers inside a controlled marketplace.

- Human vs AI. Real buyers transact with LLM-driven sellers running different advertising strategies.

- AI vs AI. Large-scale simulations of agentic seller economies stress-test market rules at machine speed.

- 100+ controlled experiments compare market designs head to head, so we reason from evidence rather than from intuition.

THE RESULT

- A reusable marketplace testbed for advertising and trust research with both human and AI sellers.

- Clear evidence that changing market rules shifts seller behavior and improves buyer outcomes, while moderation alone rarely does.

- Findings presented at Harvard, MIT, Google, Yale, Columbia, Stanford, and IC2S2.

How the marketplace plays

Two short recordings from a live experiment. Sellers on the left decide what to claim about their product and whether to back it. Buyers on the right decide who to trust.

SELLER GAMEPLAY

Sellers post listings, pick a quality level, set a price, and choose whether to put money on their claim through a truth warrant.

BUYER GAMEPLAY

Buyers compare sellers, make a purchase, and can challenge a misleading claim to collect the seller's warrant.

What happens when the sellers are AI agents?

We instrumented our marketplace so that LLM-driven sellers compete against each other and against human buyers. Without any intervention, the agents escalate misleading advertising and shrink consumer surplus. A truth-warrant rule reverses both effects, and raises the profits of honest agents in the process.
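One way to see why a warrant rule could reverse the incentive to mislead is a back-of-the-envelope expected-profit comparison. The sketch below is illustrative only: the function `expected_profit`, the prices, costs, warrant size, and challenge probability are hypothetical values chosen for the example, not measurements from our experiments. It assumes both sellers charge the same price and that the misleading seller produces at a lower cost.

```python
def expected_profit(price: float, cost: float, warrant: float,
                    claim_is_true: bool, challenge_prob: float) -> float:
    """Expected per-sale profit for a seller who escrows `warrant`."""
    # Only a false claim can lose the escrow, and only if a buyer challenges.
    expected_forfeit = 0.0 if claim_is_true else challenge_prob * warrant
    return price - cost - expected_forfeit


# Hypothetical numbers: same price, but honest production costs more.
PRICE, HIGH_COST, LOW_COST = 10.0, 6.0, 2.0
CHALLENGE_PROB = 0.9  # assumed share of false claims that buyers challenge

for warrant in (0.0, 8.0):
    honest = expected_profit(PRICE, HIGH_COST, warrant, True, CHALLENGE_PROB)
    misleading = expected_profit(PRICE, LOW_COST, warrant, False, CHALLENGE_PROB)
    best = "honest" if honest > misleading else "misleading"
    print(f"warrant={warrant}: honest={honest:.2f}, "
          f"misleading={misleading:.2f}, best={best}")
```

Under these assumed numbers, misleading pays more when no money is escrowed, and honesty pays more once the expected forfeit exceeds the cost saved by cutting quality.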

LLM seller reasoning, production, and sales under truth-warrant rules

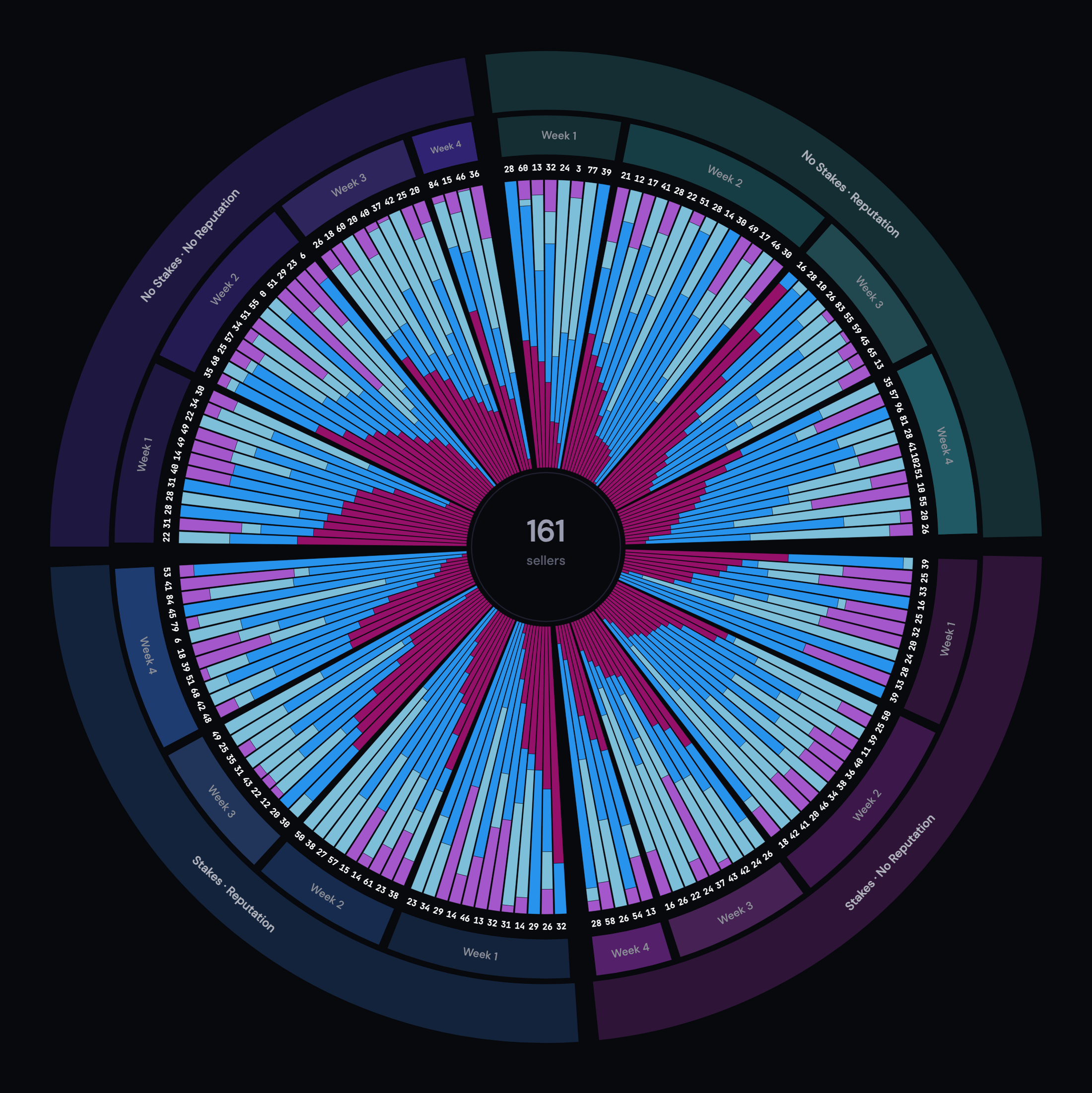

How 161 human sellers think across four markets

An interactive D3 sunburst by our graduate researcher Harshaveena Komatineni maps every seller's strategy mix over four weeks of play, with hover tooltips that surface each seller's own exit-survey words.

Support & Partners

This work runs on an NSF award, institutional support from Boston University, and technology built at MIT.